Homelab Kubernetes Platform (RKE2 + Rancher)

The sovereign Kubernetes platform my AI work runs on — a production-style RKE2 cluster on Proxmox with full GitOps (Argo CD), a high-availability control plane, restore-drilled disaster recovery, Grafana 12 observability, encrypted secrets (SOPS/age), and a self-hosted LLM gateway + local inference layered on top.

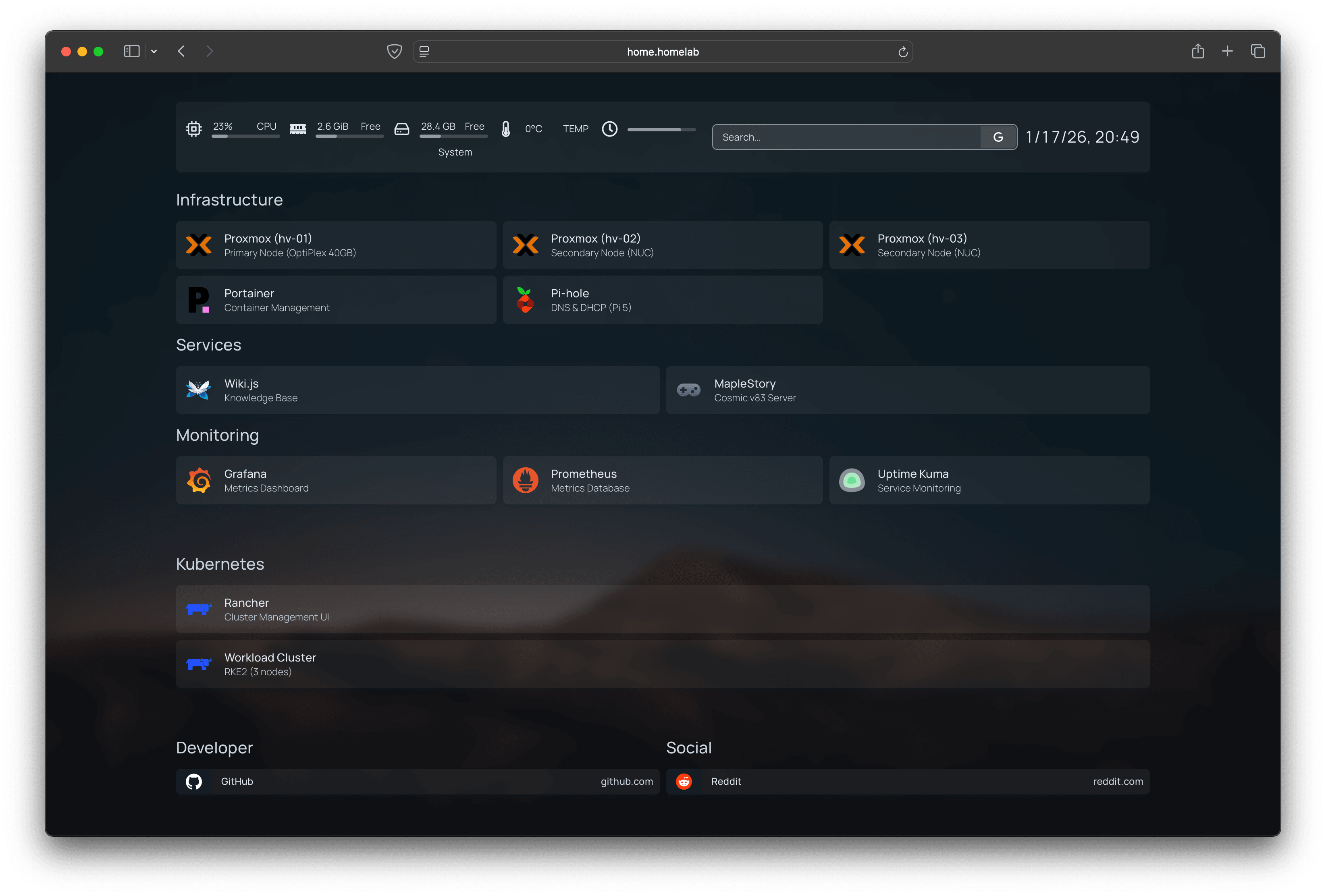

Overview

This homelab is the sovereign platform my AI work runs on — a production-style Kubernetes cluster on Proxmox (RKE2 + Rancher) that I build, run, and break myself. It is the substrate: a self-hosted LLM gateway, local inference, and retrieval services all sit on top of it, alongside the usual stateful apps.

Everything is managed as code and reconciled through GitOps (Kustomize + Argo CD) — desired state lives in Git, changes are reviewed and reproducible, and drift becomes visible and correctable. Internal services resolve through split-horizon DNS to stable hostnames instead of NodePorts.
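To make "drift becomes visible" concrete, here is a toy model of what a reconciler compares: desired state (from Git) against live state (from the cluster). It is a minimal sketch, not Argo CD's diff engine, which also handles normalization, server-side defaults, and ignore rules. The function name `diff_state` and the sample manifests are hypothetical.

```python
"""Toy model of GitOps drift detection: desired (Git) vs live (cluster) state."""

from typing import Any


def diff_state(desired: dict[str, Any], live: dict[str, Any], path: str = "") -> list[str]:
    """Return human-readable drift entries between two nested dicts.

    Only keys present in `desired` are compared, loosely mirroring how a
    GitOps tool ignores fields it does not manage (e.g. status).
    """
    drift: list[str] = []
    for key, want in desired.items():
        here = f"{path}.{key}" if path else key
        if key not in live:
            drift.append(f"missing {here}")
        elif isinstance(want, dict) and isinstance(live[key], dict):
            # Recurse into nested objects such as spec.template.
            drift.extend(diff_state(want, live[key], here))
        elif live[key] != want:
            drift.append(f"changed {here}: live={live[key]!r} desired={want!r}")
    return drift
```

For example, if someone scaled a Deployment by hand, `diff_state({"spec": {"replicas": 2}}, {"spec": {"replicas": 3}, "status": {"ready": 3}})` returns `["changed spec.replicas: live=3 desired=2"]`; a sync would then move the live value back to what Git declares.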

What I care about most here is the boring, load-bearing part: a high-availability control plane (multi-node etcd), disaster recovery that is actually restore-drilled rather than just assumed, encrypted secrets, and scoped access. It is, in the end, infrastructure and operations — but it is the kind you can trust an AI platform to live on.
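The value of a multi-node etcd comes from Raft quorum arithmetic: the cluster stays writable only while a majority of members is up. The sketch below is general and does not assume a particular node count for this cluster (the source does not state one).

```python
"""Quorum arithmetic for a Raft-based etcd cluster."""


def quorum(members: int) -> int:
    """Smallest majority of `members` voting members."""
    if members < 1:
        raise ValueError("an etcd cluster needs at least one member")
    return members // 2 + 1


def tolerated_failures(members: int) -> int:
    """How many members can be lost while the rest still form a majority."""
    return members - quorum(members)


for n in range(1, 6):
    print(f"{n} members: quorum={quorum(n)}, tolerates {tolerated_failures(n)} failure(s)")
```

Running it shows that an even member count adds no failure tolerance over the odd count just below it (four members tolerate one failure, the same as three), which is why odd-sized control planes are the usual HA layout.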

What this demonstrates

- Practical Kubernetes operations: deployments, services, ingress, PVCs, probes, rollouts

- DNS + ingress as the stable interface for internal services

- Storage topology awareness: designing around node-local persistence (local-path, WaitForFirstConsumer)

- GitOps foundations: Kustomize structure + Argo CD reconciliation, diffs, and controlled sync

- Three-layer backup strategy (Proxmox + Velero + etcd)

- Encrypted secrets management with SOPS/age

- SSH-hardened infrastructure with scoped access controls

- Grafana 12 observability with Prometheus metrics

- MCP server integration for AI-assisted cluster operations

- Substrate for AI workloads: a self-hosted LLM gateway (model routing, SSO/RBAC, cost attribution), local inference, and a retrieval (RAG) service running on the same platform

- Security hardening by default: OIDC single sign-on (Keycloak), default-deny access, key-only SSH, and SOPS/age-encrypted secrets
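The storage point above hinges on one StorageClass field. A minimal sketch, assuming Rancher's local-path-provisioner (provisioner string `rancher.io/local-path`) backs the `local-path` class; the PVC name `app-data` and its size are hypothetical. It emits JSON, which `kubectl apply -f` also accepts.

```python
"""Emit a node-local StorageClass and a PVC that uses it, as a kubectl-ready JSON List."""

import json


def local_path_storage_class(name: str = "local-path") -> dict:
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        # Assumption: Rancher's local-path-provisioner serves this class.
        "provisioner": "rancher.io/local-path",
        # Delay volume binding until a pod using the claim is scheduled, so the
        # volume is created on the node the pod lands on.
        "volumeBindingMode": "WaitForFirstConsumer",
        "reclaimPolicy": "Delete",
    }


def pvc(name: str, size: str, storage_class: str = "local-path") -> dict:
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


if __name__ == "__main__":
    manifest = {
        "apiVersion": "v1",
        "kind": "List",
        "items": [local_path_storage_class(), pvc("app-data", "5Gi")],  # hypothetical claim
    }
    print(json.dumps(manifest, indent=2))
```

The trade-off of node-local volumes is that the pod becomes tied to the node holding its data; if that node is down, the workload cannot simply reschedule elsewhere, which is part of why the backup layers below matter.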

Operational Practices

Reliability & recovery

- Proxmox snapshots before risky changes

- Velero + Kopia for Kubernetes workload backup and restore

- etcd snapshots synced to NAS on schedule

- Documented rollback procedures
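A scheduled sync is only useful if someone notices when it stops. One simple guard is a freshness check on the snapshot directory. This is a hypothetical sketch: the NAS mount path and the threshold are assumptions, since the source states neither the path nor the schedule interval.

```python
"""Check that the newest etcd snapshot in a (NAS-mounted) directory is recent enough."""

import time
from pathlib import Path
from typing import Optional


def newest_snapshot_age_hours(snapshot_dir: Path, now: Optional[float] = None) -> Optional[float]:
    """Age in hours of the most recently modified file in snapshot_dir, or None if it is empty."""
    files = [p for p in snapshot_dir.iterdir() if p.is_file()]
    if not files:
        return None
    newest = max(p.stat().st_mtime for p in files)
    reference = time.time() if now is None else now
    return (reference - newest) / 3600


def snapshot_is_fresh(snapshot_dir: Path, max_age_hours: float) -> bool:
    """True only if at least one snapshot exists and the newest is within max_age_hours."""
    age = newest_snapshot_age_hours(snapshot_dir)
    return age is not None and age <= max_age_hours
```

Usage might look like `snapshot_is_fresh(Path("/mnt/nas/etcd-snapshots"), max_age_hours=13)`, with the path hypothetical and the threshold set to the snapshot interval plus some slack. Freshness says nothing about whether a snapshot actually restores; only a restore drill confirms that.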

Observability

- Grafana 12 dashboards for cluster health and resource usage

- Prometheus metrics across all workloads

- Custom cost attribution dashboards
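The source does not say how the cost attribution dashboards compute their numbers. One simple approach is proportional showback: split a fixed cost by each namespace's share of measured usage, for example per-namespace CPU from a PromQL query such as `sum by (namespace) (rate(container_cpu_usage_seconds_total[5m]))`. The function and namespace names below are hypothetical.

```python
"""Proportional (showback-style) cost attribution across namespaces."""


def attribute_cost(usage_by_namespace: dict[str, float], total_cost: float) -> dict[str, float]:
    """Split total_cost in proportion to each namespace's usage; the shares sum to total_cost."""
    total_usage = sum(usage_by_namespace.values())
    if total_usage <= 0:
        raise ValueError("no usage to attribute against")
    return {ns: total_cost * used / total_usage for ns, used in usage_by_namespace.items()}
```

For instance, `attribute_cost({"llm-gateway": 1.5, "inference": 6.0, "apps": 0.5}, 40.0)` returns `{"llm-gateway": 7.5, "inference": 30.0, "apps": 2.5}`. Proportional splitting is easy to explain but charges idle capacity to whoever happens to be busy; request-based allocation is a common alternative.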

Security

- Key-only SSH authentication across all nodes

- Scoped kubeconfigs for different access levels

- Secrets encrypted at rest in Git with SOPS/age

- MCP servers scoped to read-only at every layer

- OIDC single sign-on (Keycloak) with role-mapped access to platform services
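Scoped kubeconfigs and read-only MCP servers both come down to Kubernetes RBAC. Below is a minimal sketch of a read-only ClusterRole bound to a service account; the names `mcp-readonly`, `mcp-server`, and `mcp` are hypothetical, and the source does not say which resources its roles cover.

```python
"""Build a read-only ClusterRole plus a binding to a service account, as kubectl-ready JSON."""

import json

# Verbs that observe state without changing it.
READ_ONLY_VERBS = ["get", "list", "watch"]


def read_only_cluster_role(name: str, resources: list[str]) -> dict:
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "ClusterRole",
        "metadata": {"name": name},
        "rules": [
            # Core ("") API group only; add rules for apps, batch, etc. as needed.
            {"apiGroups": [""], "resources": resources, "verbs": READ_ONLY_VERBS},
        ],
    }


def bind_to_service_account(role: str, sa: str, namespace: str) -> dict:
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "ClusterRoleBinding",
        "metadata": {"name": f"{role}-{sa}"},
        "roleRef": {"apiGroup": "rbac.authorization.k8s.io", "kind": "ClusterRole", "name": role},
        "subjects": [{"kind": "ServiceAccount", "name": sa, "namespace": namespace}],
    }


if __name__ == "__main__":
    role = read_only_cluster_role("mcp-readonly", ["pods", "services", "events"])
    binding = bind_to_service_account("mcp-readonly", "mcp-server", "mcp")
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": [role, binding]}, indent=2))
```

Note that `secrets` is deliberately left out of the resource list: `get` on Secrets returns their contents, so "read-only" access to them is still a disclosure. Kubernetes' built-in `view` ClusterRole makes the same choice.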